A team of university researchers has devised a new optical-acoustic side-channel attack dubbed ‘Side Eye,’ which can extract the ambient sound present in an environment at the time an image was taken.

Because videos are sequences of frames (still images), an attacker could potentially use this method to capture continuous sound from a room without ever recording through the microphone or explicitly filming video.

This could be achieved through a malicious app that has access only to the camera, even when the camera points away from the person speaking or the device's owner has physically disabled the microphone for security reasons.

arxiv.org

How the attack works

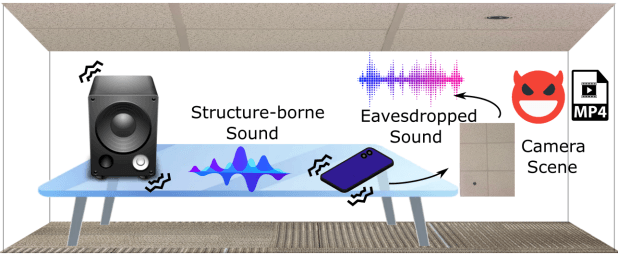

Because of their rolling shutters and movable lens structures, smartphone cameras can be unintentionally modulated by the subtle vibrations that sound waves produce in the surrounding environment. These vibrations move the camera's lens, and because a rolling shutter reads out the image row by row at slightly different instants, the motion leaves identifiable distortions in the resulting images that nonetheless remain imperceptible to the human eye.

The effect is amplified in cameras with CMOS rolling shutters, Optical Image Stabilization (OIS), and autofocus (AF), whose movable lenses make the distortions more pronounced and easier to analyze.
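To make the rolling-shutter idea concrete, here is a toy Python sketch; it is not the researchers' method. It assumes hypothetical sensor parameters (1,080 rows per frame at 30 fps, with row readout spanning the whole frame period and no blanking gap) and pretends the per-row image shift caused by the vibrating lens can be read off directly. The names `simulate_row_shifts` and `dominant_frequency` are illustrative. Under these assumptions each row acts as one audio sample taken at about 1,080 × 30 = 32,400 samples per second, so even a single frame holds a short burst of the vibration signal. Real phones have readout gaps between frames, and extracting the shift from actual image content is the hard part that the researchers' software tackles.

```python
import math

# Illustrative sensor parameters: assumptions for this sketch,
# not values from the Side Eye paper or any specific phone.
ROWS_PER_FRAME = 1080        # rows read out per frame
FRAME_RATE_HZ = 30           # frames per second
# Assume row readout spans the whole frame period with no blanking gap,
# so the effective row sampling rate is rows x fps.
ROW_RATE_HZ = ROWS_PER_FRAME * FRAME_RATE_HZ   # 32,400 row samples per second


def simulate_row_shifts(tone_hz, frames=1, amplitude_px=0.5):
    """Toy model: per-row image shift caused by a lens vibrating at tone_hz.

    With a rolling shutter, row r of frame f is read out at time
    (f * ROWS_PER_FRAME + r) / ROW_RATE_HZ, so consecutive rows sample
    the vibration at consecutive instants.
    """
    shifts = []
    for f in range(frames):
        for r in range(ROWS_PER_FRAME):
            t = (f * ROWS_PER_FRAME + r) / ROW_RATE_HZ
            shifts.append(amplitude_px * math.sin(2 * math.pi * tone_hz * t))
    return shifts


def dominant_frequency(samples, sample_rate_hz, candidates_hz):
    """Return the candidate frequency with the largest DFT power."""
    def power(freq):
        re = im = 0.0
        for n, s in enumerate(samples):
            phase = 2 * math.pi * freq * n / sample_rate_hz
            re += s * math.cos(phase)
            im -= s * math.sin(phase)
        return re * re + im * im
    return max(candidates_hz, key=power)


if __name__ == "__main__":
    shifts = simulate_row_shifts(440)   # one frame "hears" a 440 Hz tone
    print(len(shifts), "row samples from a single frame")
    print("recovered tone:", dominant_frequency(shifts, ROW_RATE_HZ, range(100, 2000, 10)), "Hz")
```

Running it reports 1080 row samples from one frame and recovers the 440 Hz tone. The naive DFT over a short list of candidate frequencies keeps the sketch dependency-free; a realistic pipeline would use an FFT library and a far more robust way of estimating the per-row shift from real images.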

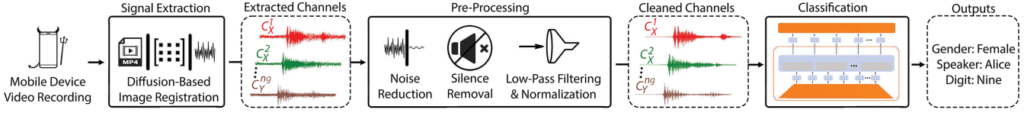

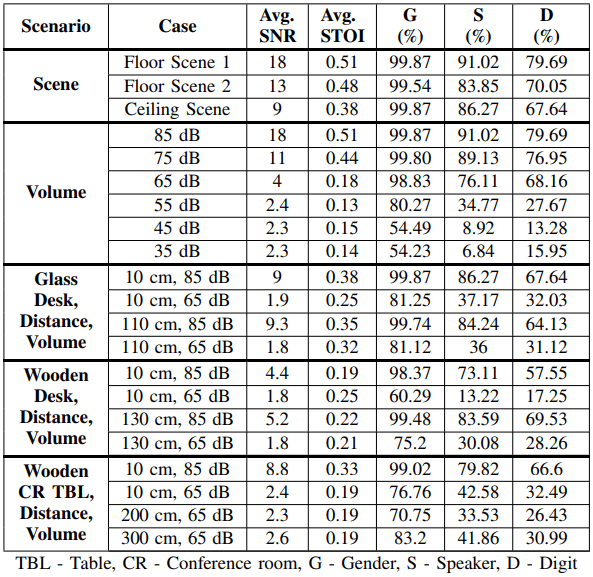

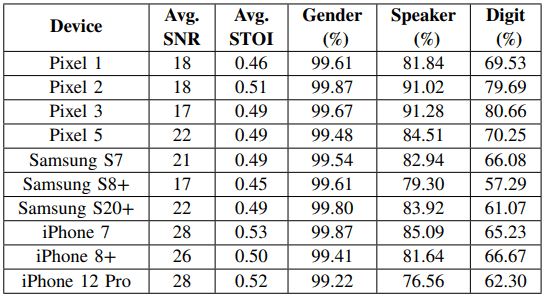

The researchers have devised a software-based method to turn these image distortions back into audio signals. Their tests on ten different smartphone models showed that Side Eye can extract audio in which spoken digits are recognized with over 80.6% accuracy, identify individual speakers 91.3% of the time (from a group of 20), and determine speaker gender with 99.7% accuracy.

The attack works best when the phone and the sound source (e.g., a speaker) share the same surface.

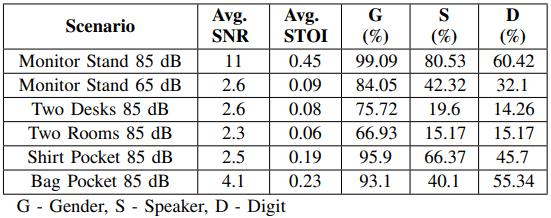

Side Eye can still produce usable data in more covert setups, although these conditions are far from ideal: they inevitably weaken sound propagation and, by extension, the image modulation.

With enough effort, then, Side Eye could disclose conversations, reveal information about people who are present in a room but outside the image or video frame, or help deduce a location from other acoustic fingerprints, such as passing airplanes or trains. This amounts to covert surveillance or espionage, which could enable blackmail, among other abuses.

Is Side Eye a real threat?

Many attacks of this kind remain largely theoretical. Documenting them is still crucial, however, because rapid technological progress across many fields keeps opening up new practical capabilities.

For Side Eye to work, sound vibrations need a mechanical path to the phone, such as a shared surface, which further limits the scenarios in which it could be abused. The researchers note in their paper that airborne propagation alone does not cause detectable modulation in present-day smartphone camera systems. As a result, there is no immediate risk of mass exploitation.

Traditional acoustic eavesdropping and side-channel attacks face significant distance and data-rate constraints that make them challenging to execute, but increasingly accessible machine-learning models could make such attacks more feasible.

Side Eye appears to be a highly specialized attack suited to narrowly scoped intelligence collection. However, if it were refined to work at a large enough scale, it could threaten the privacy of millions of people. Unlike ephemeral data, images and videos can be stored for a long time, so photos and videos taken today or in the past could be reanalyzed years later, once technology has advanced, to reveal private information that is currently undetectable.